YouTube removes eight million inappropriate videos

A Google-led machine-learning system is helping to detect such objectionable videos, and most of the deleted videos were spam or attempts to upload adult content.

YouTube removed more than eight million videos over a three-month period in response to complaints about inappropriate content.

The figure covers October to December 2017, during which an estimated 8.3 million videos were removed, many of them for containing adult content.

According to Google, which owns YouTube, the system is getting faster at taking down videos and hunts down dodgy content in a number of ways.

Google reported that of the 6.7 million videos first detected by machines, 75.9 percent had zero views at the time they were reviewed.

Google says it has improved the system's long-term accuracy in detecting terrorist videos by training its machines to identify violent extremism, using two million videos that were first reviewed by hand.

Once a bad video has been reviewed and taken down, Google saves the details of the clip as a mathematical code, or digital fingerprint. The next time someone attempts to upload that video, it is either blocked automatically or flagged to YouTube's content reviewers, who will take it down.

-

Minnesota man charged after $350m IRS tax scam exposed

-

Trump reached out to police chief investigating Epstein in 2006, records show

-

San Francisco 49ers player shot near post-Super Bowl party

-

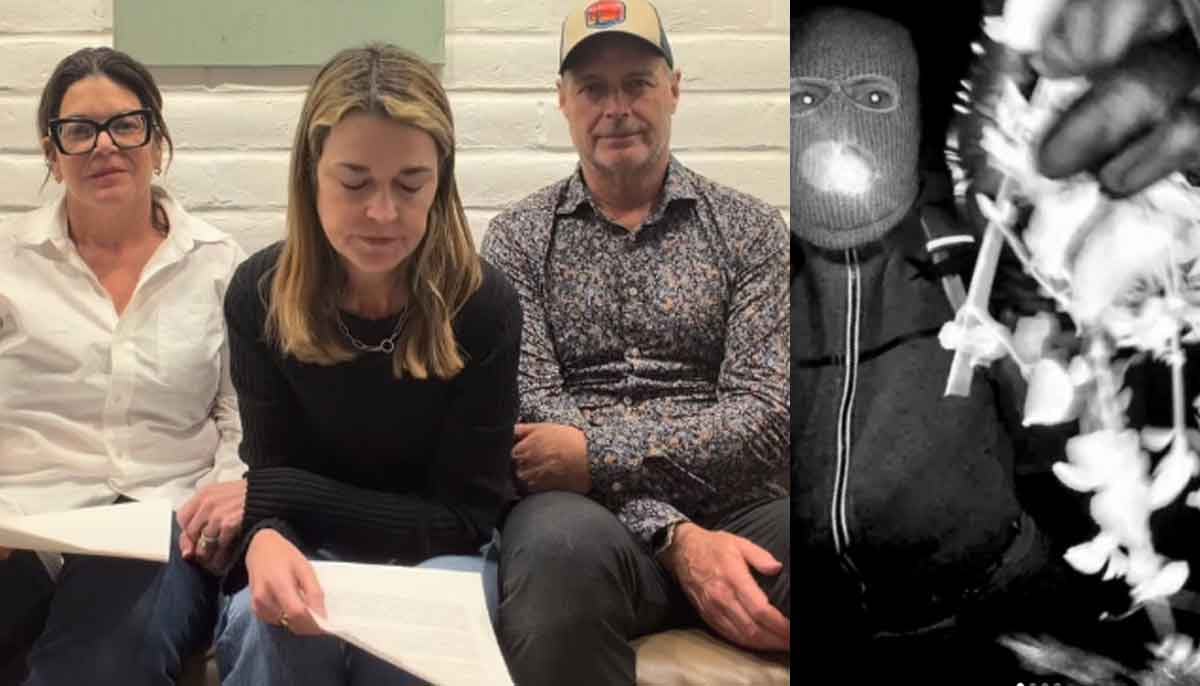

Ransom deadline passes: FBI confirms ‘communication blackout’ in Nancy Guthrie abduction

-

Piers Morgan finally breaks silence on kidnapping of Savannah Guthrie's mother Nancy

-

Lenore Taylor resigns as Guardian Australia editor after decade-long tenure

-

Epstein case: Ghislaine Maxwell invokes Fifth, refuses to testify before US Congress

-

Savannah Guthrie receives massive support from Reese Witherspoon, Jennifer Garner after desperate plea