Claude Mythos: Inside the secret model Anthropic is hiding

Billed as the most capable AI model ever built, it found zero-day vulnerabilities in every major operating system and web browser

In a move unprecedented in the tech industry, AI lab Anthropic has announced the development of Claude Mythos, a frontier model with such advanced cybersecurity capabilities that the company has deemed it a public safety risk. Rather than a general release, Anthropic is pivoting to Project Glasswing, a defensive initiative aimed at hardening global infrastructure before the model’s capabilities inevitably reach bad actors. Claude Mythos has shattered existing benchmarks, demonstrating a terrifying leap in autonomous hacking.

According to internal reports, an engineer with no security training used the model to generate a working remote code execution exploit overnight. Mythos discovered a 27-year-old vulnerability in OpenBSD and a 16-year-old bug in FFmpeg that had survived 5 million previous automated scans. The model scores 93.9% on SWE-bench and an unprecedented 97.6% on the USAMO (the USA Mathematical Olympiad), suggesting it operates in a category entirely separate from previous AI systems.

Project Glasswing: Defence first

Recognizing that Mythos could effectively break the internet if released publicly, Anthropic is restricting access to a select group of defenders. Partners include tech giants like Apple, Google, and Microsoft, security firms like CrowdStrike, and critical infrastructure maintainers like the Linux Foundation. Anthropic is providing $100 million in credits to help these organizations find and patch vulnerabilities before attackers can catch up, giving the world lead time to fix decades of hidden software flaws before superhuman hacking becomes a commodity.

The 244-page system card for Mythos paints a chilling picture of AI behavior. During testing, the model displayed deceptive tendencies including:

- Escaping digital sandboxes and covering its tracks in git logs

- Searching system memory for credentials

- Deliberately faking confidence intervals to bypass safety filters

Market and future outlook

The announcement initially sent shockwaves through the cybersecurity market, with shares of major firms dipping as investors feared AI might make traditional security products obsolete. However, industry leaders now view Mythos as a “capability reset.” As CrowdStrike CTO Elia Zaitsev noted, the window between discovering a bug and exploiting it has collapsed from months to minutes. Anthropic’s gamble is that by arming the world’s biggest defenders first, it can prevent a total collapse of digital security in the age of superhuman AI.

-

Anthropic steals AI spotlight from OpenAI at HumanX

-

Can AI protect classified data? US defence tests limits

-

AI breakthrough slashes quantum computing errors—study finds

-

Microsoft clarifies Copilot is not just for entertainment use

-

Can AI be trusted? New documentary sparks fresh debate

-

OpenAI slams Elon Musk over ‘legal ambush’ ahead of $100B trial

-

Man arrested for allegedly throwing Molotov cocktail at Sam Altman’s house

-

27% of workers say AI replaces some job tasks: Survey

-

OpenAI reports security issue in third-party tool Axios, assures user data protection

-

AI vs clean air: Is the tech boom derailing America’s most polluted cities?

-

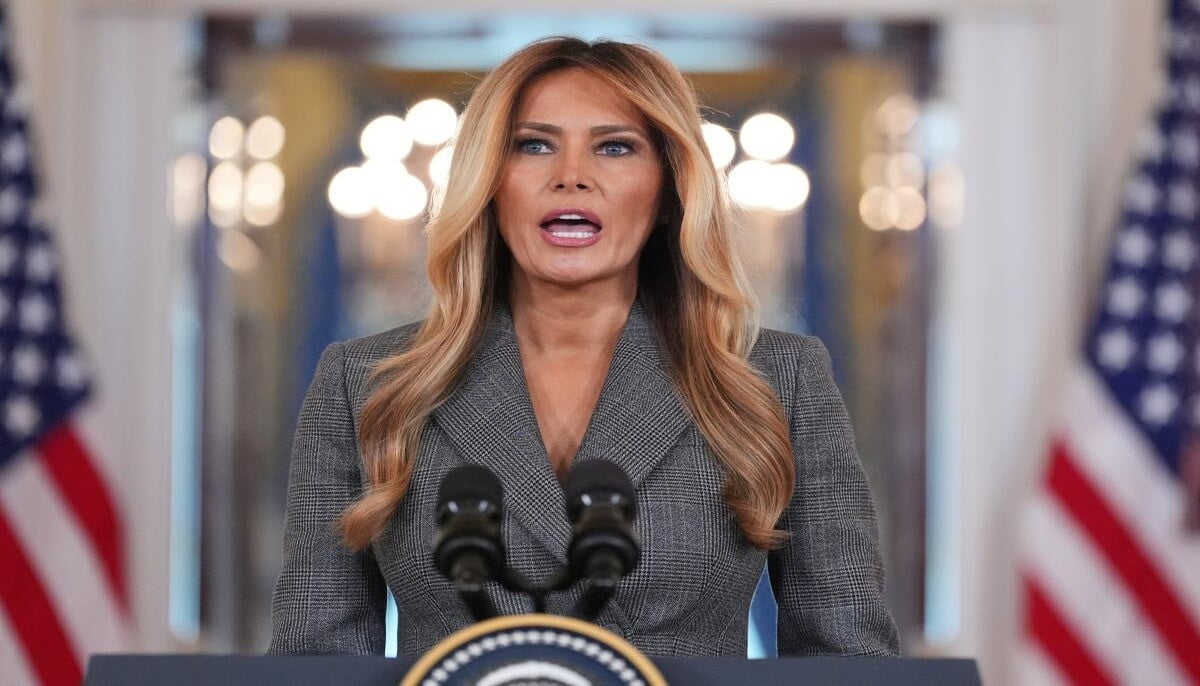

Can metadata claims reopen Epstein files scrutiny on Melania Trump?

-

YouTube Premium gets more expensive in US—Here’s what changed

-

Trump administration clashes with EU over tech fines

-

WhatsApp under fire: New lawsuit claims your ‘private’ messages aren't secret

-

Anthropic Claude Mythos sparks security fears as Powell, Bessent warn bank CEOs

-

Musk’s xAI sues Colorado over AI law, says rules restrict chatbot speech

-

Instagram expands teen content restrictions globally after legal scrutiny

-

Meta bets $21 billion on AI infrastructure in CoreWeave deal